Apple, as usual, seems to be working to push the boundaries of its devices’ hardware further.

According to recent rumors from China, the Cupertino giant is considering multi-spectral camera sensors for future generations of iPhone.

The report, shared by the well-known leaker Digital Chat Station on Weibo, suggests that the company has a concrete interest in this technology, although caution about the timing of an actual market release is still warranted.

iPhone with a multi-spectral sensor: beyond human vision

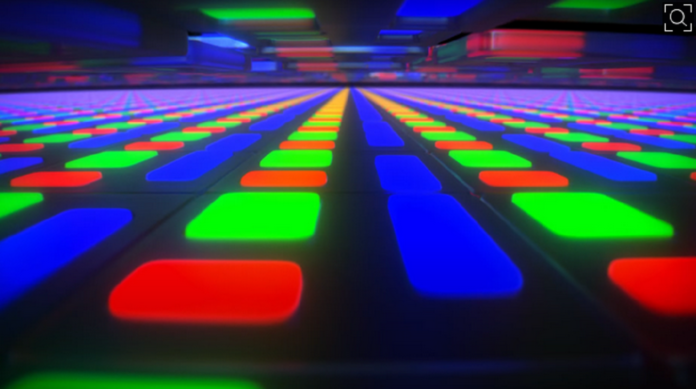

Conventional cameras, including those currently on flagship smartphones, capture light through receptors sensitive to red, green, and blue (RGB). By measuring the relative amount of light captured by each receptor, the image processor calculates the color value for each pixel; for example, equal signals from the red and blue receptors, with little or none from the green one, are interpreted as purple.
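To make the idea concrete, here is a minimal Python sketch of how three receptor signals can be turned into a color. It is an illustration only: the function name `describe_pixel` and the hue boundaries are assumptions chosen for this example, and a real image processor applies far more elaborate steps that are not modeled here.

```python
import colorsys


def describe_pixel(red, green, blue):
    """Map three receptor signals (each 0.0 to 1.0) to a hue angle and a rough color name.

    Hypothetical helper for illustration; the name boundaries below are arbitrary.
    """
    hue, saturation, _value = colorsys.rgb_to_hsv(red, green, blue)
    hue_degrees = round(hue * 360)

    if saturation < 0.1:
        # All three receptors report nearly the same signal: no dominant color.
        name = "gray"
    elif hue_degrees < 30 or hue_degrees >= 330:
        name = "red"
    elif hue_degrees < 90:
        name = "yellow"
    elif hue_degrees < 150:
        name = "green"
    elif hue_degrees < 210:
        name = "cyan"
    elif hue_degrees < 270:
        name = "blue"
    else:
        name = "purple"
    return hue_degrees, name


# Equal red and blue signals, no green: the ratio, not the absolute level, sets the hue.
print(describe_pixel(1.0, 0.0, 1.0))
print(describe_pixel(0.5, 0.0, 0.5))
```

Both calls land on the same hue because only the relative amounts differ from pixel to pixel in brightness, not in proportion, which is the point the paragraph above makes.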

This system, while extremely precise and capable of generating millions of color variants, is limited to the visible light spectrum perceived by the human eye.

A multi-spectral camera, on the other hand, can detect frequencies beyond our natural perception, particularly in the infrared and ultraviolet bands.

This ability to “see the invisible” allows the sensor to collect significantly more data about the framed scene, theoretically offering a depth of image analysis that standard RGB sensors cannot match.

From satellites to smartphones: practical benefits

Multi-spectral imaging did not originate in the consumer market. The technology was first developed for military purposes, specifically target identification, before finding applications in the industrial and scientific sectors.

Today it is commonly used in weather satellites, in drones for monitoring agricultural crops, and even in the art world to identify counterfeit paintings or analyze underlying layers of paint. In industrial contexts, it is a key tool for quality control in automated production lines.

Integrating this technology into a pocket-sized device like the iPhone could bring tangible benefits, chief among them superior color fidelity. By reading non-visible frequencies, camera software could balance colors with surgical precision, eliminating the unwanted color casts that complex artificial lighting often causes.

Moreover, manufacturers who have already experimented with this approach, such as Huawei, have cited notable improvements in low-light performance, leveraging the extra data to reconstruct details in shadowed areas.

Between skepticism and market reality

Despite the intriguing premise, it is worth tempering the hype. As colleagues at 9to5Mac also note, Apple’s interest in a technology does not automatically translate into a finished product in the short term. Digital Chat Station’s report specifies that Apple is currently evaluating the supply chain and that actual testing has not yet begun.

Furthermore, earlier attempts in the Android world did not produce miracles. When Huawei integrated similar sensors into some of its devices, reviews did not highlight the revolutionary leap in quality that had been hoped for, suggesting that the practical impact for the average user could be marginal relative to the cost of implementation.

Digital Chat Station has a good track record, but not an infallible one. Considering that Apple explores hundreds of technologies that never see the light of day, and given how embryonic this rumor still is, we are unlikely to see an iPhone with multi-spectral ‘super vision’ in the immediate future.

Nevertheless, the mere fact that Cupertino is considering this path confirms the intention to leave nothing to chance in the race for the best mobile camera.